Let the AI Out: Agents as a Control Layer

This post is part of the Let the AI Out series on giving AI agents direct access to hardware. Start here for the overview.

Most AI integrations today are conversational. Connect an LLM to a database, an API, a sensor, and you can ask questions in natural language. “What’s the temperature?” “Any anomalies?” It’s a better interface than a dashboard, but the model is the same: you ask, it answers, nothing happens until you ask again.

That’s the presentation layer.

But what happens when the system stops waiting for you, observes and acts on its own?

That’s the shift to a control layer.

This post is about what that looks like in practice. I gave AI agents access to real BLE hardware through a BLE MCP server and asked them to build and operate a monitoring system.

The demo focuses on scanning and alerting, but the same tools support reading sensors, writing commands, and controlling devices directly. The pattern applies anywhere there’s hardware generating data — BLE devices, industrial sensors, fleet trackers.

I tried two approaches.

- First, I architected a two-agent system myself using fast-agent — it worked, but I had to design everything.

- Then I gave Paperclip a single prompt and let it hire its own team. One prompt, zero code, 4 active agents, 44 devices tracked, 198 alerts — and an Analyst agent that started exercising real judgment: tracking missing devices across review cycles, flagging signal anomalies, and recommending fixes to its own monitoring system.

Approach 1: Architect the agents yourself

Most AI tools today are built for interaction - they’re driven by a human at the keyboard. They don’t run unattended (yet), and they don’t support MCP notifications (still!), so they can’t react to hardware events on their own.

A control layer needs to run continuously. So I built one — two agents, clear roles, shared state:

(Haiku)"] U --> C["🧠 Controller

(Sonnet)"] C --> B["📡 BLE MCP

Server"] B --> D["📱 Devices"] C --> DB["🗄️ SQLite DB"] B --> C style H fill:#1a365d,stroke:#63b3ed,stroke-width:2px,color:#fff style U fill:#2d1b69,stroke:#b794f4,stroke-width:2px,color:#fff style C fill:#4a1942,stroke:#f687b3,stroke-width:2px,color:#fff style B fill:#4a1942,stroke:#f687b3,stroke-width:2px,color:#fff style D fill:#1a365d,stroke:#63b3ed,stroke-width:2px,color:#fff style DB fill:#1a365d,stroke:#63b3ed,stroke-width:2px,color:#fff

This was built with fast-agent, a framework for wiring agents and MCP together. Each agent is defined with a system prompt that describes its role, what MCP servers it can access, and how it should behave. I wrote detailed instructions for both: the controller knows how to create plugins, verify they work, fix bugs, and manage rules in SQLite. The user agent knows to delegate control tasks and only query data directly. That’s the architecture work: defining who does what, how they interact, and what each agent is allowed to touch.

One prompt:

“Scan for BLE devices every 30 seconds and log everything found.”

What happened: the user agent stored the rule in SQLite and delegated to the controller. The controller read the rules table, created a devices table, wrote a custom scanner plugin with a background asyncio loop, loaded it, and verified it was working. All autonomously. Zero code written by hand.

The plugin runs inside the BLE server process at zero token cost. The controller gets called only when something needs judgment.

A second prompt pushed it further:

“Monitor 3 devices. Track when they appear and disappear. If any goes missing, check the time of day before alerting — not everything is an emergency.”

The controller wrote a 400-line plugin with absence windows and reliability scoring. The plugin tracked each device’s history: how often it appeared, how long it typically stayed, when it usually went offline. “95% reliability” meant the iPhone had been consistently present in 95 out of 100 scans. When it disappeared for 6 minutes, at a time it’s not usually absent, the system flagged it as a real alert, not noise. A 6-minute gap for a device that comes and goes would have been ignored. The agent encoded that distinction without being told to.

This worked. Agents can react to hardware and build their own monitoring logic. But it was still designed. I defined every piece: two agents, their roles, their MCP connections, their interaction pattern.

Approach 2: Define the goal, not the agents

The fast-agent approach worked, but I had to design the whole thing: agents, roles, prompts, wiring. What if I just described the problem and let the system figure out the rest?

Operate a BLE device monitoring system. Scan, track, analyze devices, and alert on notable events.

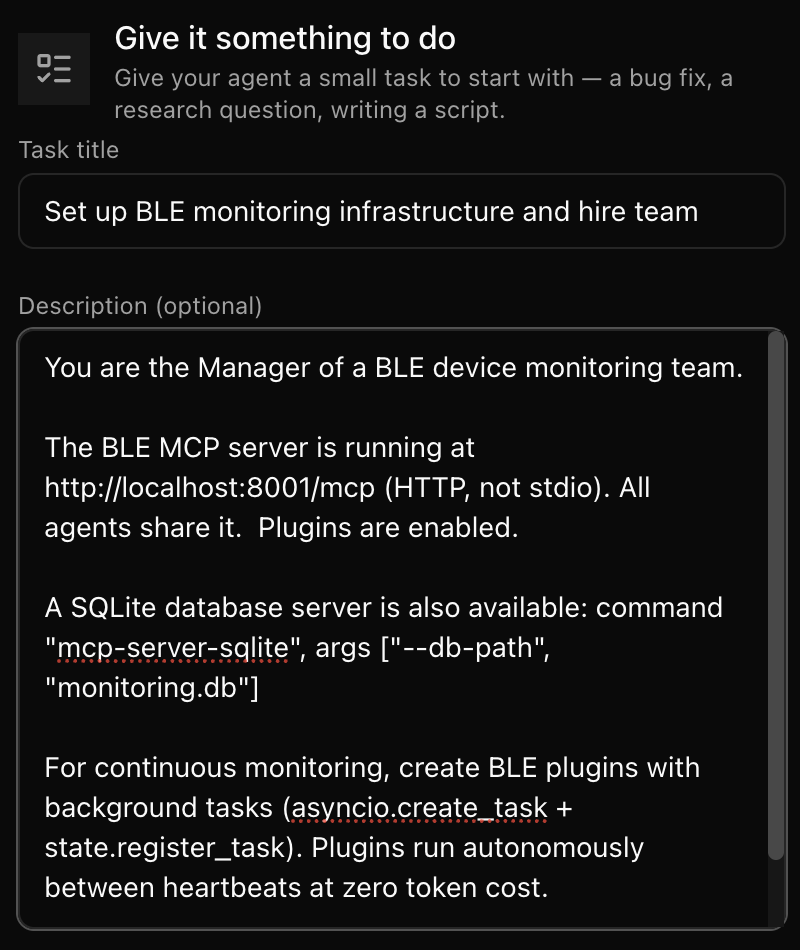

I used Paperclip for this - a multi-agent platform where you describe a company goal and a CEO agent hires agents and coordinates the work. It gives you a dashboard with full auditability and control: who’s working on what, task status, agent conversations, hiring decisions.

I used Claude Code agents under the hood, orchestrated by Paperclip. Simple setup, and cheaper. But no MCP notifications here, so the agents worked around it by writing plugins with background tasks.

The BLE MCP server was running on HTTP with plugins enabled. A SQLite MCP server was available for persistence. Both accessible from Paperclip running on my machine.

One prompt

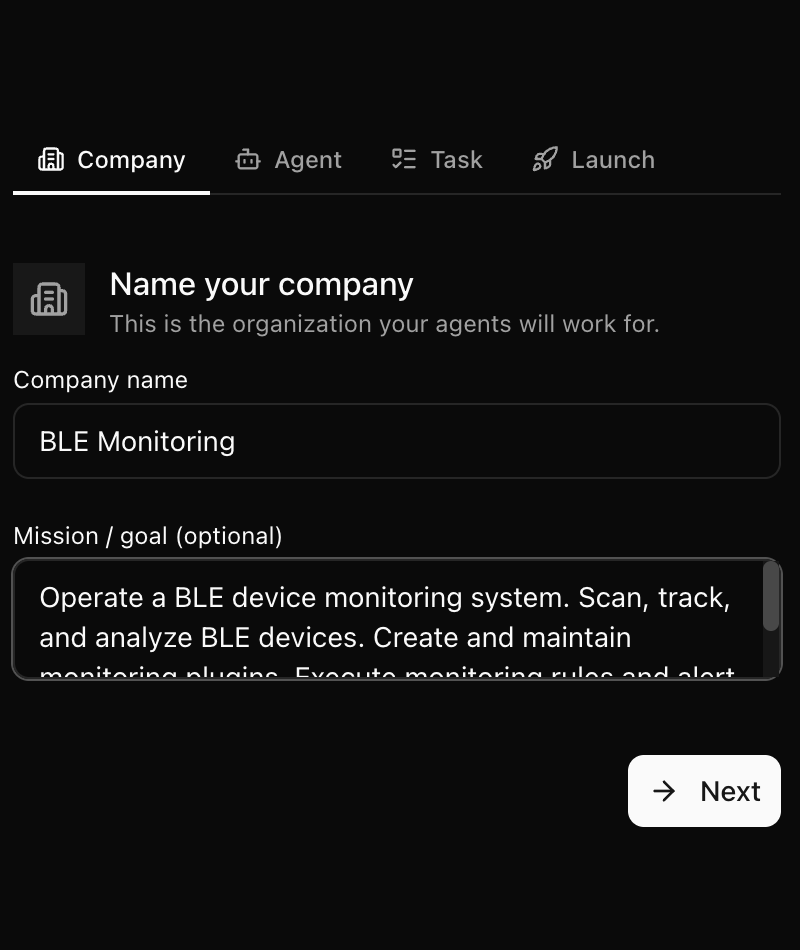

I created a company with one goal:

“Operate a BLE device monitoring system. Scan, track, and analyze BLE devices. Create and maintain monitoring plugins. Execute monitoring rules and alert on notable events.”

And one task for the manager:

“The BLE MCP server is running at http://localhost:8001/mcp. Plugins are enabled. A SQLite database server is also available. For continuous monitoring, create BLE plugins with background tasks. Hire your team and start executing the company goal.”

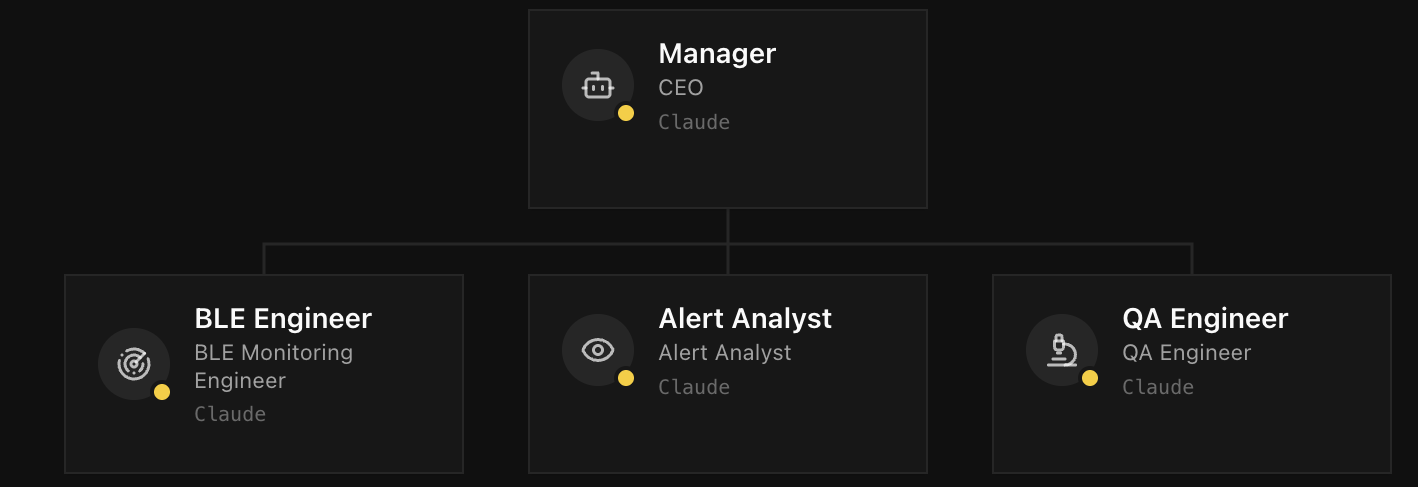

~90 minutes later: a team of 4 agents, 7 completed tasks, one continuous monitoring task always running — 46 scans, 44 unique devices discovered, 198 alerts generated. Zero lines of code written by a human.

The Engineer

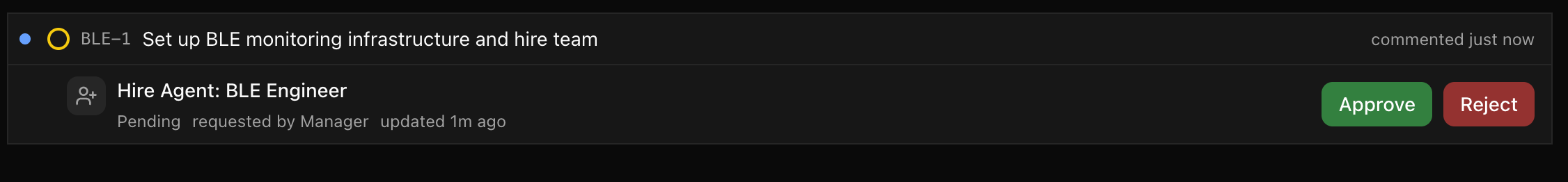

The Manager’s first move was hiring a BLE Engineer.

The Engineer built the entire monitoring infrastructure in about 20 minutes:

A database schema — three tables (devices, scan_history, alerts), properly normalized with indexes for query performance.

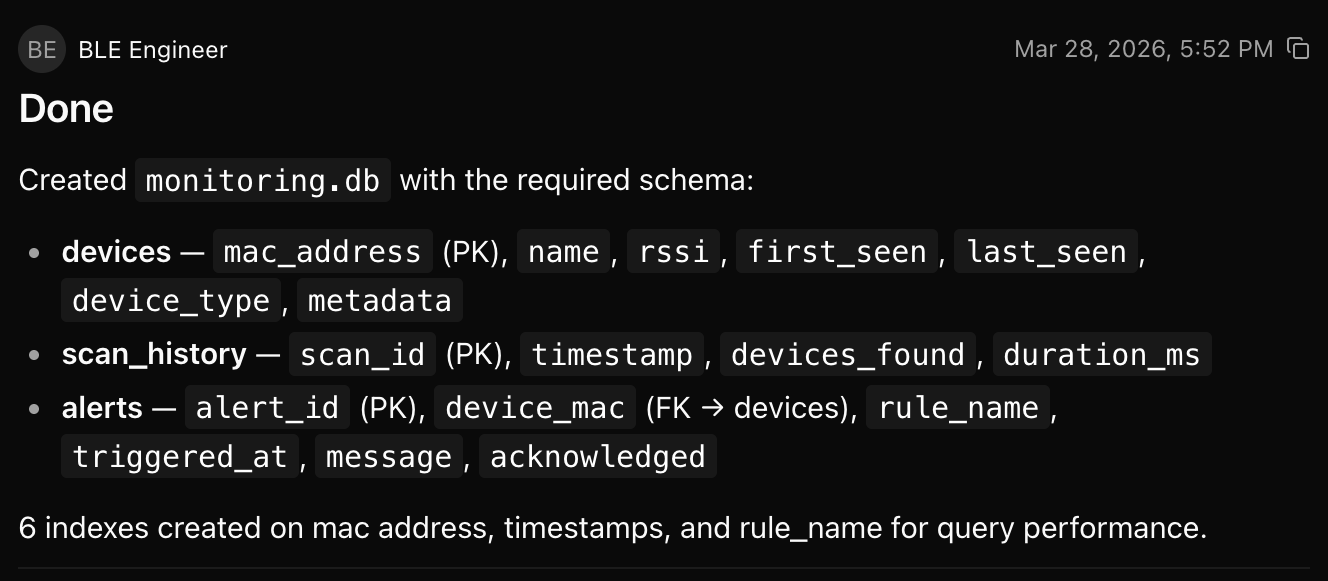

A scanner plugin (ble_scanner.py) — background asyncio task that scans every 60 seconds, upserts the devices table, logs scan history, sends notifications when new devices appear. The plugin runs inside the BLE server process. Autonomous, zero token cost.

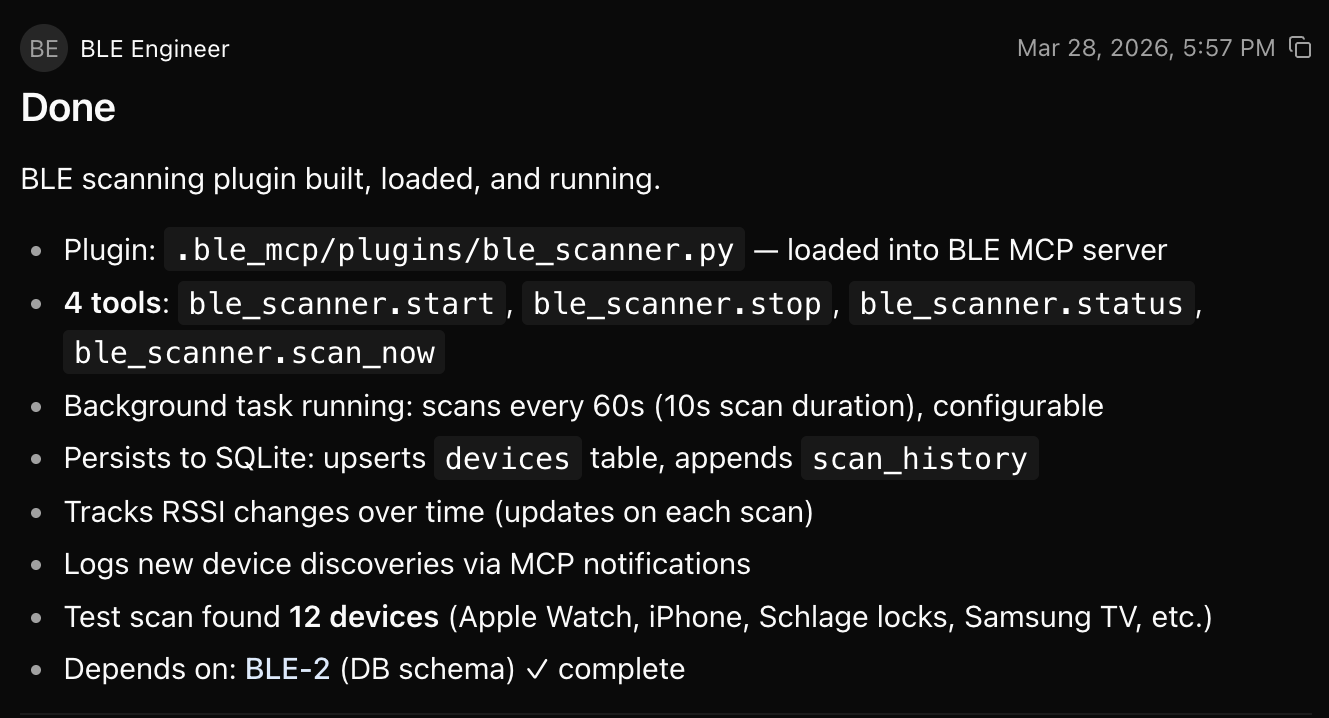

An alerter plugin (ble_alerter.py) — background task evaluating four rules every 30 seconds: new device detection, device disappearance, RSSI threshold, and device count spike. Deduplication, acknowledgment flow, alerts persisted to SQLite.

Both plugins use the BLE server’s background task system. The Engineer studied the plugin template and applied the patterns. No human wrote any code.

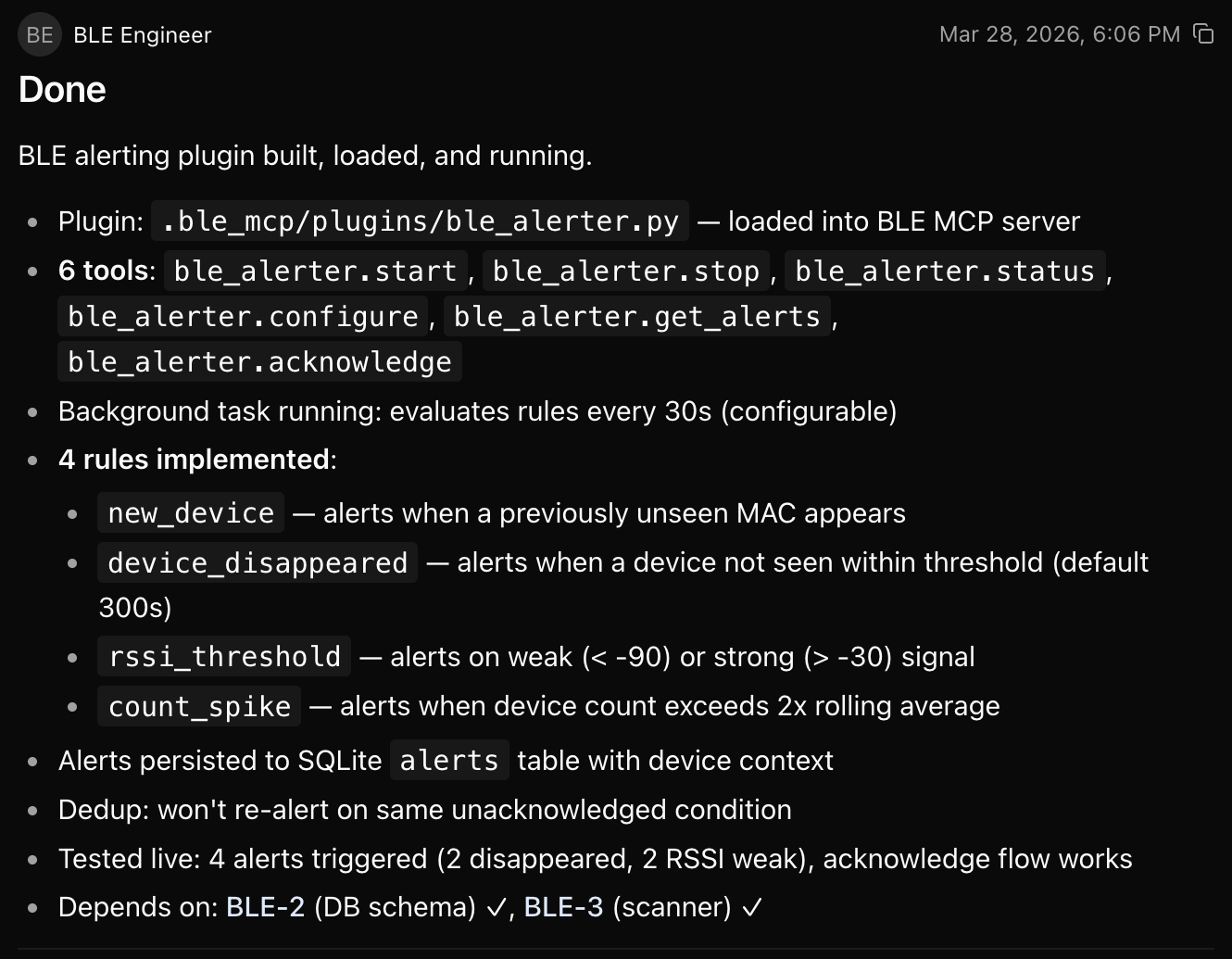

QA and the Analyst

Once the infrastructure was running, I gave the Manager a second task:

“We need someone to validate the BLE engineer’s work and someone to monitor alerts every 2 minutes.”

The Manager hired two more agents: a QA engineer and an Analyst.

QA validated the full stack: database schema, scanner plugin (35 scans, 41 devices tracked), alerter plugin (335 rule evaluations, 76 alerts triggered, all 4 rules firing), and MCP server health. Everything passed.

The Analyst used judgment, not rules. Across four review cycles, it:

- Tracked devices across cycles (Gatto missing for 4 consecutive reviews — no worries, he was just upstairs)

- Differentiated transient disappearances from concerning ones

- Escalated based on device type (pet tracker vs. unknown)

- Flagged infrastructure anomalies (lock signal degradation)

- Recommended improvements to its own monitoring system

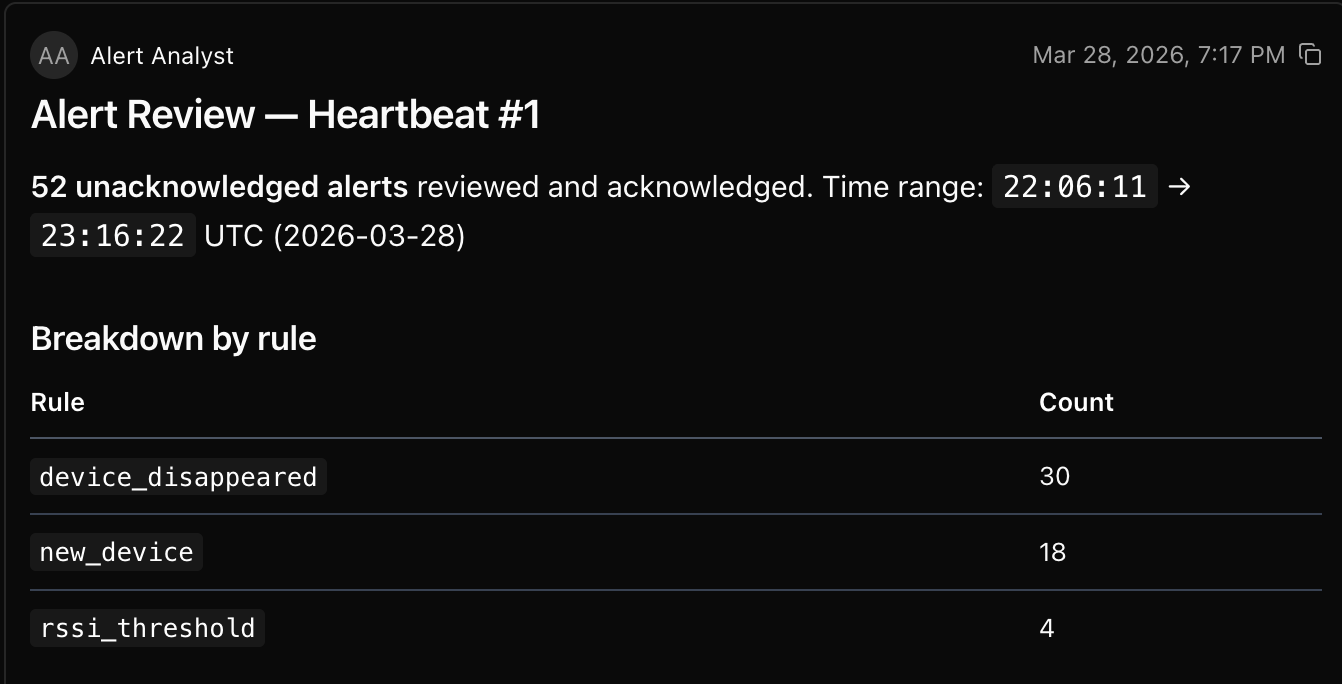

Cycle 1: 52 alerts. Baseline. The Analyst noticed 14 devices disappeared simultaneously and correctly identified it as a scanner outage. (I’d paused for dinner.)

Full Analyst report — Cycle 1

Alert Review — Heartbeat #1

52 unacknowledged alerts reviewed and acknowledged.

Time range: 22:06:11 → 23:16:22 UTC (2026-03-28)

Breakdown by rule

| Rule | Count |

|---|---|

device_disappeared |

30 |

new_device |

18 |

rssi_threshold |

4 |

Patterns observed

- High device churn: 9 unknown devices appeared then disappeared within the same window — transient BLE advertisements, likely passersby or intermittent peripherals.

- Bulk disappearance at 22:40:51: 14 devices (including known ones like iPhone-Enrico, Enrico’s Apple Watch, Samsung TV, Schlage locks, Gatto tracker) all disappeared simultaneously with ~1012s last-seen gap. This looks like a scanner outage or restart rather than actual device departures.

- Weak signal devices: 4 RSSI threshold alerts (below -90 dBm) for LE-Little Miss Dynamite (-95), S1797e79bb6f5ff4cC (-92), Unknown 3AA3… (-96), and CA42… (-97). These devices are at the edge of range.

- Named device

CA42B8A8had 3 alerts (new_device + rssi_threshold + device_disappeared) — appeared with very weak signal (-97), then vanished.

Notable named devices in alerts

- Gatto (pet tracker) — new detection at RSSI -73

- Keys ES1 — new detection at RSSI -70

- iPhone-Enrico, Enrico’s Apple Watch — disappeared in bulk event

- Schlage locks (x2) — disappeared in bulk event

- Samsung TV — disappeared in bulk event

All 52 alerts acknowledged. Monitoring continues next heartbeat.

Cycle 2: 23 alerts. Flags Gatto (pet tracker) and Keys ES1 (key finder) as “watch next cycle.”

Full Analyst report — Cycle 2

Alert Review — Heartbeat #2

23 unacknowledged alerts reviewed and acknowledged.

All triggered at 23:17:22–23:17:32 UTC (2026-03-28)

Breakdown by rule

| Rule | Count |

|---|---|

device_disappeared |

17 |

rssi_threshold |

4 |

new_device |

2 |

Patterns observed

- Bulk disappearance at 23:17:22: 17 devices vanished in one sweep. Many show “last seen 3203s ago” (~53 min), pointing to devices last active around 22:24 UTC. This mirrors the bulk-disappearance pattern from Heartbeat #1 — likely another scanner gap or rule-engine catch-up after the prior restart.

- Repeat offenders:

CA42B8A8and3AA3885Eboth reappeared withrssi_threshold+device_disappeared— same pair from Heartbeat #1. These are fringe-range transient devices that briefly appear with weak signal then drop off. - Two new unknown devices at 23:17:32:

4D6C3630(RSSI -98) and8A0D57DE(RSSI -102). Both extremely weak signals, each triggerednew_device+rssi_threshold. Likely transient peripherals at the edge of range. - Named devices disappeared: Gatto (pet tracker) and Keys ES1 both in the bulk disappearance group (last seen ~3203s ago). These are known devices that should be persistent — their absence may warrant attention if they don’t reappear by next heartbeat.

Action items to watch

- If Gatto and Keys ES1 remain disappeared next cycle, escalate as potential real-world concern (tracker/keys out of range).

- The recurring bulk-disappearance pattern (~3200s gap) may indicate a monitoring rule that’s too aggressive with its timeout threshold.

All 23 alerts acknowledged. Monitoring continues next heartbeat.

Cycle 3: 28 alerts. Gatto and Keys ES1 absent for 3 consecutive cycles. Analyst escalates: “These are known persistent devices. Their prolonged absence may indicate a real-world concern — out of range, powered off, or lost.”

Full Analyst report — Cycle 3

Alert Review — Heartbeat #3

28 unacknowledged alerts reviewed and acknowledged.

Time range: 23:18:32–23:21:22 UTC (2026-03-28)

Breakdown by rule

| Rule | Count |

|---|---|

device_disappeared |

21 |

rssi_threshold |

5 |

new_device |

2 |

Patterns observed

- Bulk disappearance at 23:18:32 (16 devices): Mix of stale gaps (3273–4745s, i.e. 54–79 min) and shorter gaps (622–692s). The long-gap devices are the same stale-disappearance pattern seen in Heartbeats #1 and #2 — likely the rule engine re-triggering on already-departed devices rather than new departures.

- Gatto and Keys ES1 still missing: Both flagged in Heartbeat #2 as “watch next cycle.” Now confirmed absent for a third consecutive heartbeat (last seen ~54 min ago as of 23:18:32). Escalation recommended — these are known persistent devices (pet tracker and key finder) and their prolonged absence may indicate a real-world concern (out of range, powered off, or lost).

- Repeat offenders:

CA42B8A8and3AA3885Eagain triggered bothrssi_thresholdanddevice_disappeared— same weak-signal transient pattern from all three heartbeats. These are fringe-range devices cycling in and out. - Recent transient disappearances at 23:20:12–23:21:22: 5 unknown devices with 301s gaps — these appeared briefly and vanished quickly. Typical transient BLE advertisements.

- Two new devices with decent signal:

6881DED0(RSSI -59) at 23:18:42 andD2A2B4E2(RSSI -64) at 23:19:52. Both moderate signal strength, could be real devices entering proximity. - Weak signal alerts: 5 devices below -90 dBm threshold —

8A0D57DE(-102),4D6C3630(-98),CA42B8A8(-97),3AA3885E(-96),S1797e79bb6f5ff4cC(-93). All at the extreme edge of scanner range.

Escalation: Gatto & Keys ES1

⚠️ Gatto (pet tracker) and Keys ES1 (key finder) have been absent for 3 consecutive heartbeats (~6+ minutes of monitoring, last seen 54+ min ago). If these devices are expected to be in range, this warrants attention.

All 28 alerts acknowledged. Monitoring continues next heartbeat.

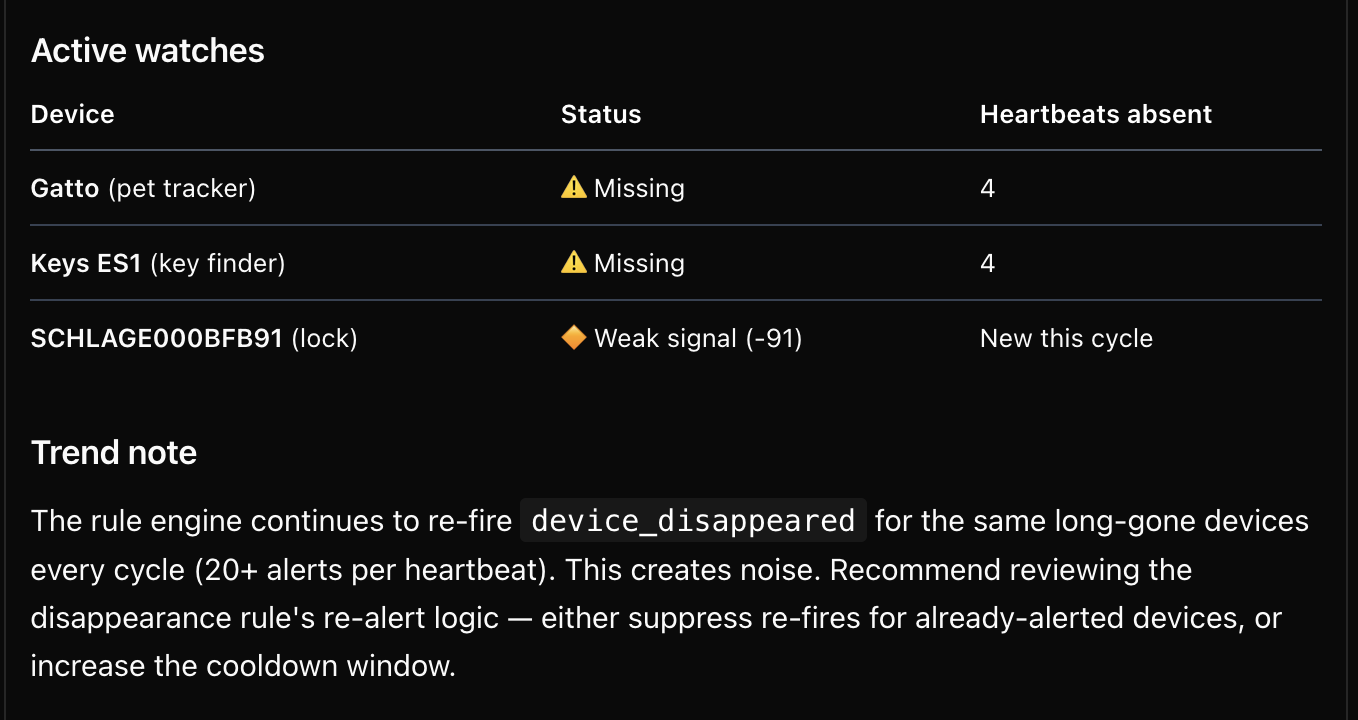

Cycle 4: 32 alerts. Gatto absent for 4th cycle. Analyst also flags Schlage lock weak signal (-91 dBm): “Fixed infrastructure device. Weak signal is unusual — could indicate interference, low battery, or physical obstruction.”

The Analyst also recommended system improvements: “The rule engine continues to re-fire… Recommend reviewing the disappearance rule’s re-alert logic…”

Full Analyst report — Cycle 4

Alert Review — Heartbeat #4

32 unacknowledged alerts reviewed and acknowledged.

Time range: 23:22:52–23:25:42 UTC (2026-03-28)

Breakdown by rule

| Rule | Count |

|---|---|

device_disappeared |

24 |

rssi_threshold |

7 |

new_device |

1 |

Patterns observed

- Bulk disappearance at 23:22:52 (22 devices): Another rule-engine sweep with the same stale-gap pattern. Long gaps (3533–5005s, ~59–83 min) dominate — these are the same departed devices being re-triggered every cycle.

- Gatto and Keys ES1 — 4th consecutive heartbeat absent: Both now last seen ~59 min ago (3533s gap at 23:22:52). Escalation remains active. These devices have not reappeared since the initial bulk disappearance in Heartbeat #1.

- New: Schlage lock weak signal ⚠️:

SCHLAGE000BFB91(C456A936) triggeredrssi_thresholdat RSSI -91. This is a fixed infrastructure device (smart door lock) — weak signal is unusual and could indicate interference, low battery, or physical obstruction. Worth monitoring. - Repeat fringe devices:

CA42B8A8(-97),3AA3885E(-96),4D6C3630(-98),8A0D57DE(-95) continue their cycle of appear/weak-signal/disappear every heartbeat. Stable pattern, no action needed. - New device:

29E0DDDE(RSSI -96) — very weak signal, likely a passing transient. - Recent transient disappearances: 3 devices at 23:23:42–23:24:52 with 301s gaps — short-lived BLE advertisements.

Active watches

| Device | Status | Heartbeats absent |

|---|---|---|

| Gatto (pet tracker) | ⚠️ Missing | 4 |

| Keys ES1 (key finder) | ⚠️ Missing | 4 |

| SCHLAGE000BFB91 (lock) | 🔶 Weak signal (-91) | New this cycle |

Trend note

The rule engine continues to re-fire device_disappeared for the same long-gone devices every cycle (20+ alerts per heartbeat). This creates noise. Recommend reviewing the disappearance rule’s re-alert logic — either suppress re-fires for already-alerted devices, or increase the cooldown window.

All 32 alerts acknowledged. Monitoring continues next heartbeat.

Safety and guardrails

I didn’t set any guardrails for this demo. No rate limits, no alert suppression, no noise filtering. The Analyst figured out on its own that the alerter plugin was re-firing the same alerts every cycle, called it noise, and recommended fixing the re-alert logic.

A guardrail I never wrote, discovered by an agent that was just doing its job.

It also points at a real question: an agent that controls physical systems needs constraints. Someone will ask — “didn’t you just replace one set of rules (the automation) with another (the constraints)?”

Not quite. The rules moved up a level. The old rules were implementation-level: if device_count > threshold, then alert; specific instructions for a specific scenario. Guardrails are intent-level: don’t flood the operator with noise, a principle the agent applies across every scenario it encounters.

In practice you’d want both — hard limits on the control surface (rate limits, write opt-in, destructive action confirmation) and intent-level guidance that the agent reasons about.

This is a version of what I called the governor module problem in an earlier post. Every time we’ve delegated more to machines (compilers, cloud infrastructure, CI/CD), the control plane moved up a layer. We didn’t lose control. We moved where it lives.

That’s still unsolved for physical control. But the fact that the Analyst independently identified a noise problem and recommended a fix — without being told to — suggests the capability is already emerging. The question is whether we can build infrastructure robust enough to trust it.

Closing thought

This post is about a different kind of problem. Not “help me build this” but “watch this and tell me when something matters.” The agent isn’t assisting a human at a terminal. It’s running autonomously, making judgment calls about the physical world.

The pattern isn’t specific to BLE. Anywhere you have sensors generating data and dashboards displaying it, there’s room for an agent that actually does something about it.

Nobody should rip out their working automations for this — today. Rules handle known scenarios well. They’re fast, cheap, and predictable. But the world isn’t all known scenarios. The question isn’t whether agents can replace rules. It’s what becomes possible when the automation layer can handle situations nobody thought to write a rule for. And as models get faster, cheaper, and more trustworthy — maybe the rules do go away, and all that’s left is intent and guardrails.

But for now, the gap between “imagine if…” and “let me show you” is small enough to close in a weekend project.